By Hassen Lorgat

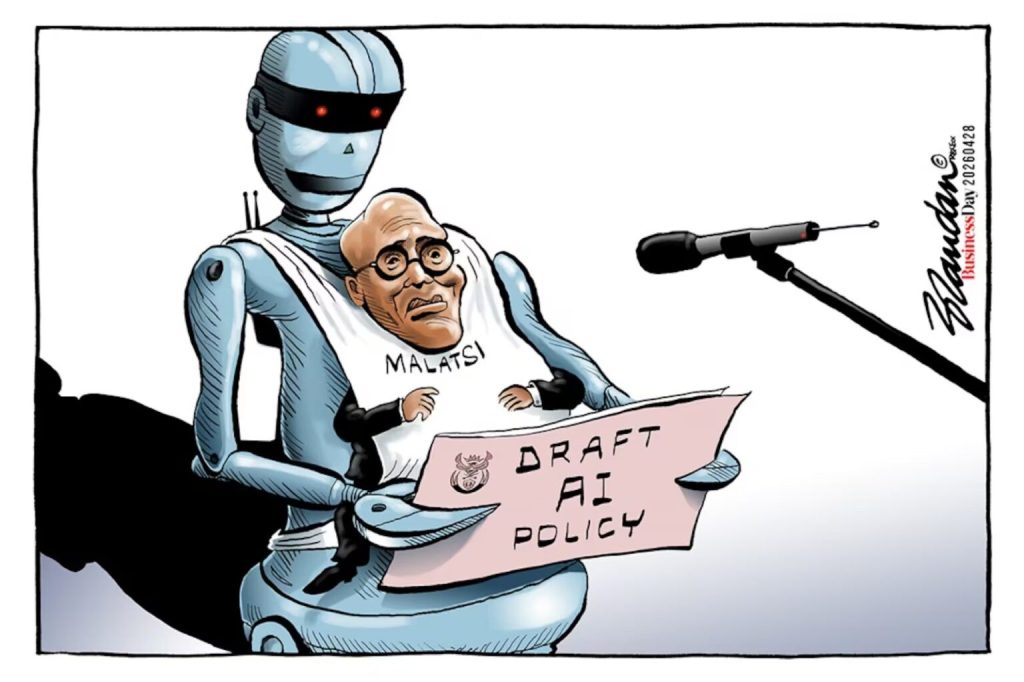

The Minister of Communications, Solly Malatsi, addressed the nation as he withdrew the Draft National AI Policy on 26 April 2026: “I am sorry. It should not have happened. I am embarrassed.” He added: “I am withdrawing the Draft National Artificial Intelligence Policy. South Africa deserves better.”

A few weeks on, the initial laughter may have subsided. But the central concern remains: the underlying political economy of the policy is unlikely to change.

The withdrawn 86-page document, released on 9 April with a submission deadline of 10 June 2026 at 16h00, warned that “late submissions may not be considered.” Yet it quickly became clear that the process itself was flawed. News24 reported that the draft contained bogus academic references—so-called AI “hallucinations.” At least six of the 67 citations were reportedly generated by AI tools, and the South African Journal of Philosophy confirmed that works attributed to it did not exist.

This episode raises urgent questions—not only about the use of unregulated AI in policymaking, but about the kind of regulation that will follow. The pause created by the withdrawal offers an opportunity for alternative voices to shape the debate.

A people-driven regulation?

It is unlikely that the revised document will depart significantly from its neoliberal framing—only now stripped of fabricated footnotes. The politics remain.

For this reason, the self-organising civil society think tank, the Datacentres, Surveillance and Control Working Group, will meet in mid-May to reflect on the substance of the withdrawn paper. Our guest speaker will be Joan Kinyua, a Kenyan digital rights activist and founding president of the Data Labelers Association of Kenya (DLA). A former data labeler, she brings firsthand experience of the full spectrum of annotation work and has become a leading global voice for ethical AI and labour justice.

The struggle for worker rights and trade unionism

Kinyua’s work focuses on the often invisible and precarious workforce powering modern AI systems. She highlights the “appalling” conditions faced by data workers, and the urgent need for:

- Fair wages, reversing the decline in pay to below $1/hour in many developing countries

- Social protections, including mental health support for workers exposed to traumatic content

- Limits on intrusive surveillance and extreme digital monitoring

- The right to organise and form democratic unions

This workforce—data labelers, content moderators, and AI trainers—exists in deep precarity. As outsourced contractors, they lack basic legal and social protections. Efforts to unionise are frequently met with aggressive resistance from employers.

A critical view of “AI empires”

I first encountered Joan Kinyua’s organising work through Karen Hao, whom Kinyua frequently cites on the human costs of AI. Hao’s book Empire of AI documents these costs and places them within a broader systemic crisis, linking labour exploitation to several “anti-democratic” pillars reminiscent of old-style colonialism:

- Intellectual property extraction: Artists’ and writers’ work is used without consent or compensation

- Environmental strain: Data centres consume vast amounts of water and electricity, often at the expense of local communities

- Algorithmic colonialism: Labour and data from the Global South are used to generate wealth in the Global North

In essence, a small number of powerful corporations control the technology while externalising the costs—borne disproportionately by workers and vulnerable communities.

AI, war, and human rights

These concerns are not abstract. They are visible in contexts of conflict and occupation.

A strong component of the Working Group’s analysis is rooted in anti-war activism and support for South Africa’s case at the International Court of Justice regarding Gaza. AI-driven targeting and surveillance systems raise profound human rights concerns.

In Gaza and the West Bank, AI is reportedly used for both targeting and population control: to track, surveil and target people. Targeting systems such as Lavender and The Gospel generate target lists with limited human verification, while tracking tools such as “Where’s Daddy?” follow individuals to their homes, increasing the risk to civilians. At checkpoints and in places like Hebron, facial recognition systems such as Red Wolf and Blue Wolf are used to monitor Palestinians without their consent.

These technologies are often marketed internationally as “battle-tested,” so that tactics developed in conflict zones are exported to other regions. Some of them, in particular facial recognition systems, are already operational here in South Africa.

It is for this reason that we must continue to support the United Nations’ efforts to prohibit Lethal Autonomous Weapon Systems (LAWS). These systems, capable of selecting and engaging targets without human intervention, require enforceable international bans, not voluntary guidelines. These demands have been championed by civil society groups such as Stop Killer Robots, which has correctly pointed out that: “This issue extends beyond conflict. Technologies used on the battlefield are often transferred to policing and border management, making anyone a potential victim of automated harm.”

Data centres and the AI economy

Data centres are central to the AI economy and represent a rapidly expanding global industry. AI data centres are big business, and major technology corporations, including Musk’s ventures, Amazon and Microsoft, are investing heavily in this space. Despite endemic secrecy, one report puts it starkly: “less than two years later, the landscape has shifted violently. We have moved from the ‘Megawatt Era’ to the ‘Gigawatt Era,’ where AI data centers consume as much power as major metropolitan cities.”

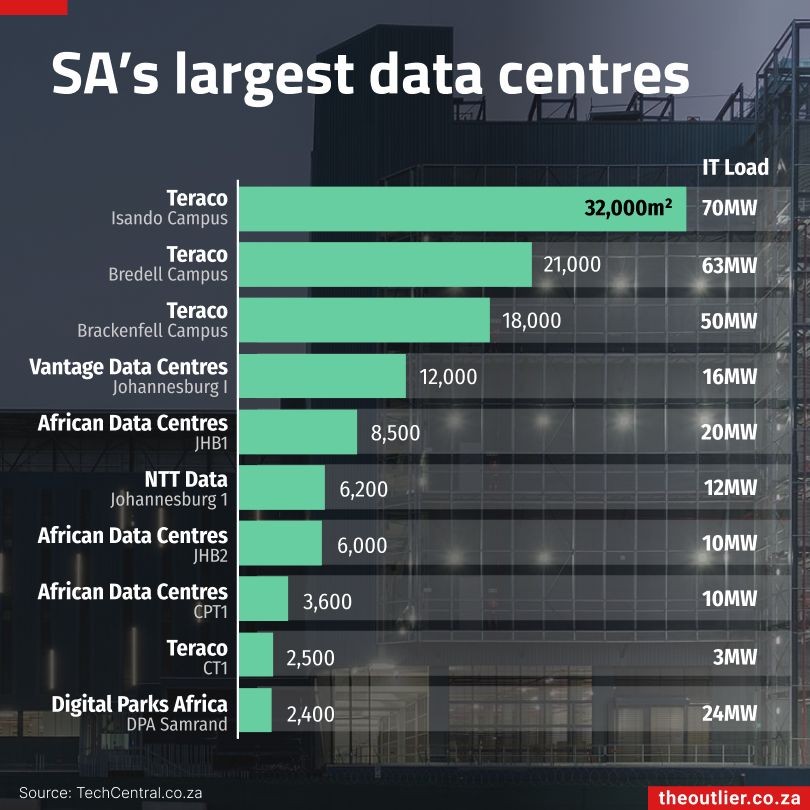

The United States hosts approximately 5,500 data centres, accounting for over 40% of global AI capacity (a global map is available at https://map.datacente.rs/). Africa, by contrast, remains a relatively small player. According to The Outlier’s Alistair Otter, South Africa has around 55 data centres with a combined load capacity of roughly 350MW, but only five are AI-capable, representing a mere 1% of global AI capacity.

Government projections estimate over R50 billion in new investment over the next three years. However, this vision fails to address critical red lines. Data centres require enormous amounts of electricity and potable water—both scarce resources in a semi-arid, energy-constrained country.

The question is not whether South Africa should engage with AI, but how. We can follow familiar neoliberal models that have struggled to deliver inclusive growth, or pursue an alternative developmental path that prioritises sustainability, equity, and public interest.

Inequality and environmental strain

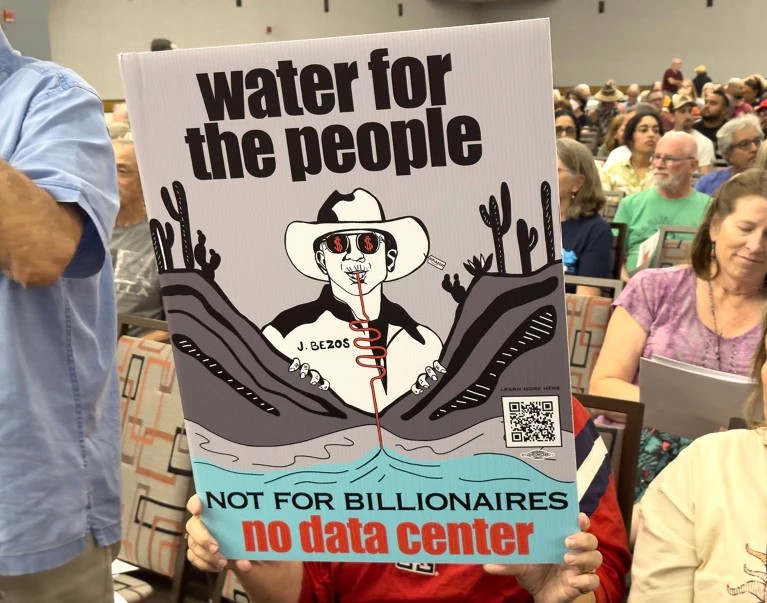

Concerns about data centre expansion are already emerging. Civil society organisations have raised issues around land use, environmental impact, and governance. Data centres are highly resource-intensive. In some cases, a small number of facilities can consume a significant share of a city’s electricity or water supply—an alarming prospect in a country already facing constraints.

Daily Maverick recently reported on the campaigns of the Housing Assembly and Foxglove, which raise many of the concerns I highlight here, including land, residential and rezoning rights. According to the report, data centres are “energy hogs”: they need massive amounts of electricity to run servers 24/7 and even more water to keep those servers from overheating. That just four facilities could use 34% of a major city’s current supply is staggering, especially in a water-scarce and power-constrained country like South Africa.

What is clearly lacking is transparency and accountability to those who live and work in Cape Town, especially since a transnational corporation seeks to draw 160MVA from the grid. Who are these companies? By all accounts they are local, but who are their backers? Little is known about the real backers of projects such as those of the local Cavaleros Group, Equinix or Teraco, yet we know from experience that the big boys like Microsoft are never far away. Furthermore, the cities that are supposed to provide the infrastructure must remember that they have a social contract with us, the citizens, and must not use loopholes to evade accountability.

The real inclusion of citizens’ groups is clearly lacking. Who can tell the City of Cape Town about water? Not long ago, the city faced Day Zero. Any such ventures must be seriously scrutinised not only by the city or the province but by the country as a whole, as unplanned migration may result. The costs of electricity and water for residents are also likely to increase.

Corporations will say that they have found sophisticated new ways to use water or energy more efficiently, but these have yet to be tested over a long period. Most disturbing is an argument we have already heard in struggles over critical raw materials: we must rush, because if we miss this window we will lose a rare opportunity to create jobs and uplift our people.

We have heard this logic of speed before.

Public participation is clearly insufficient. Communities, especially those like Cape Town that have recently lived through water crises, must have a decisive voice. Only when communities are organised can they assert their right to free, prior and informed consent and the right to say NO, rights enshrined in international human rights law and recognised by our courts.

In an interview with Radio 702’s John Perlman, Carnie argued that while AI jobs may not be labour-intensive, they represent the future. He warned that cities risk being left behind if they fail to invest, likening it to missing a fast-moving train. He concluded that short-term “pain” may be necessary for long-term gains—if efficiency improvements materialise.

But we have heard this before: tariff reductions, trade liberalisation and broad-based black economic empowerment were all framed as pathways to growth, yet failed to deliver jobs or environmental sustainability. Furthermore, when we rush, we cannot build local or indigenous capacity and are left at the mercy of powerful groups, usually transnational corporations that are reaping what they have not sown.

Corporations remain adept at externalising costs, as we have seen and will continue to see and feel.

(courtesy Nature.com)

The reputable journal Nature notes that while tech giants want to move data centres into space to reduce their environmental impact, the engineering challenges are formidable. Because space is a vacuum, there is no air or water to carry heat away, making it nearly impossible to cool AI chips without heavy, expensive radiators. Combined with radiation and orbital debris, this makes space-based data centres impractical for now.

What can we learn? Transparency and accountability remain limited. While local firms such as Teraco, Equinix, and the Cavaleros Group are visible actors, global corporations are often key investors or clients behind the scenes.

Communities must have a meaningful role in decision-making, particularly in areas that have experienced water shortages or infrastructure stress. Public participation cannot be treated as a formality. It is an essential pillar of our history of struggle and of the way we want to be governed: participatory democracy.

We must organise globally, continentally and locally

So, what principles should guide a new AI policy?

It must reclaim public power from tech corporations. AI should not simply enrich Big Tech—it must be decentralised, community-owned, and democratically governed. This aligns with calls for “algorithmic justice,” ensuring AI protects human rights and empowers workers and marginalised groups.

Communities are already organising. In Memphis, Tennessee, residents supported by the NAACP are suing xAI for what the community believes are Clean Air Act violations.

Elon Musk’s xAI operates methane gas turbines without permits to power its data centres, Colossus 1 and Colossus 2, contributing to pollution in a majority-Black city. Community members report worsening conditions despite corporate promises. This should sound familiar.

Principles to guide action

Here are some principles that could guide our new national AI policy:

- The Right to Say No (RTSN): Communities must be able to accept or reject data centre projects

- Workers’ rights to organise: Unionisation must be protected and promoted

- Opposition to corporate monopolies: AI ownership should be decentralised and democratised

- Precautionary principle: No deployment without proven safety; corporations must bear the burden of proof

- Collective ownership and democratic oversight: Transparency and accountability must replace opaque corporate control

- Community empowerment: Affected populations must shape AI development and deployment

- Algorithmic justice and equity: Systems must be designed to eliminate bias and inequality

- Global regulation of military AI: Enforceable limits on autonomous weapons systems

Beyond hallucinations

Don’t hallucinate. Organise.

AI is not neutral. It reflects the values and interests of those who design and deploy it. The challenge is to ensure it serves the many, not the few. For that to be realised, we must rediscover the art and science of democratic organising.

If South Africa’s water or energy systems are compromised in the pursuit of AI investment, will policymakers again say, “I am sorry. It should not have happened”? Or will they simply accept it as the price of advancing free-market ideology? We cannot let this happen on our watch.

There is already sufficient evidence to make better choices. The task is not simply to move beyond AI “hallucinations,” but to confront the deeper structural issues that shape whose interests are served—and at what cost.

*Hassen Lorgat is a member of the working group on data centres and convenor of the CSOs Tailings Working Group. He writes in his personal capacity.*
